2010/08/26

Track Built on Open Specifications

The Google Buzz team just rolled out the Track feature, which lets you subscribe to real time updates for search terms via PubSubHubbub. It's pretty awesome, and builds on top of the work we've done for the Buzz Firehose. A search query is just like any other URL -- say, https://www.googleapis.com/buzz/v1/activities/track?q=coffee+OR+tea -- and you can subscribe to it with PubSubHubbub using existing code. In fact that URL will work in any PubSubHubbub-enabled client (like Google Reader).
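As a rough illustration of "subscribe to it with PubSubHubbub", here is a minimal sketch that builds the form-encoded body of a subscription request. The parameter names come from the PubSubHubbub spec; the callback URL is a made-up placeholder, and the hub endpoint (which a real client would read from the feed's hub link) is deliberately left out.

```python
from urllib.parse import urlencode, parse_qs

# Topic URL taken from the post; any Track query URL would work the same way.
TOPIC = "https://www.googleapis.com/buzz/v1/activities/track?q=coffee+OR+tea"

def build_subscribe_body(topic, callback, verify="async"):
    """Return the application/x-www-form-urlencoded body for a subscribe request."""
    return urlencode({
        "hub.mode": "subscribe",
        "hub.topic": topic,
        "hub.callback": callback,  # hypothetical subscriber endpoint
        "hub.verify": verify,
    })

body = build_subscribe_body(TOPIC, "https://subscriber.example.com/push")
```

The body would then be POSTed to whatever hub the feed advertises; the hub verifies the callback before sending updates.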

Experimental Magic Signatures Draft

(Picture via oskay.)

This morning, I put up an experimental Magic Signatures draft for comment. It is a fairly extensive reorganization of the spec, so it would eventually become an -01 version of the Magic Signatures specification. It is still provisional and changing, and it really needs feedback (including implementation feedback). It adds two optional pieces, fills in a number of previously unspecified details, and is organized to be easier to use as a building block in specifications other than Salmon.

The major additions are:

- HMAC private key signatures

- Ability for signer URLs to provide signer keys on GET directly

- A JSON based format for signer keys
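To give a feel for the first addition, here is a minimal sketch of an HMAC signature over the base64url data. It assumes HMAC-SHA256 and a pre-shared secret; the draft's actual algorithm identifier and key handling may differ, and the function names are hypothetical.

```python
import base64
import hashlib
import hmac

def hmac_sign(shared_key: bytes, b64_data: str) -> str:
    """Sign the already base64url-encoded data with a shared secret (assumed HMAC-SHA256)."""
    mac = hmac.new(shared_key, b64_data.encode("ascii"), hashlib.sha256).digest()
    return base64.urlsafe_b64encode(mac).decode("ascii")

def hmac_verify(shared_key: bytes, b64_data: str, sig: str) -> bool:
    """Recompute the signature and compare in constant time."""
    return hmac.compare_digest(hmac_sign(shared_key, b64_data), sig)

example_sig = hmac_sign(b"shared-secret", "U2FsbW9u")
```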

The major (possibly breaking) change from the prior version is that public keys retrieved via XRD are retrieved via XRD Property elements rather than Link (since all current applications are using inline keys in any case, and this is more parallel to the new JSON format).

Looking forward to feedback on this experiment. If it's successful, we'll rev both Magic Signatures and tweak Salmon to incorporate it.

2010/07/29

We Built this Firehose on Open Specs

(Photo CC joevans.)

We did do some special things on the supply side for efficiency. If you GET the firehose feed you'll see that it never contains any updates, just feed metadata and the hub link. Conceptually, it contains the last 0 seconds of updates. This is primarily because it doesn't make sense to poll this feed, so it exists only to provide a subscription mechanism. Internally, for efficiency we're pushing new updates to the hub with full data rather than pinging the hub and waiting for it to poll the feed. But, we're using the standard Atom format to push the data. The hub is doing coalescing of updates so when a bunch come in over a small period of time, as with the firehose, it will send them in batch every few hundred msecs to each subscriber. So the POSTs you get for the firehose will almost always contain a feed with multiple entries in it -- whatever has come in since the last time you've received an update. There are also some tweaks to the hub retry configuration; if your server doesn't keep up with the firehose the hub will back off fairly quickly and won't attempt many retries.
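Since each POST from the hub will usually carry several entries, a subscriber needs to loop over them rather than assume one update per request. A minimal sketch, using only the standard library; the sample feed and its ids are invented for illustration.

```python
import xml.etree.ElementTree as ET

ATOM = "{http://www.w3.org/2005/Atom}"

def entry_ids(body: bytes):
    """Return the atom:id of every entry in a pushed Atom feed document."""
    root = ET.fromstring(body)
    return [entry.findtext(ATOM + "id") for entry in root.iter(ATOM + "entry")]

# Hypothetical batched push containing two coalesced updates.
sample_push = b"""<feed xmlns="http://www.w3.org/2005/Atom">
  <title>firehose</title>
  <entry><id>tag:example.com,2010:1</id><title>first</title></entry>
  <entry><id>tag:example.com,2010:2</id><title>second</title></entry>
</feed>"""

ids = entry_ids(sample_push)
```

A real handler would also want to return quickly from the POST and process entries asynchronously, since the hub backs off if the subscriber falls behind.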

2010/06/06

XAuth is a Lot Like Democracy

XAuth is a lot like democracy: The worst form of user identity prefs, except for all those others that have been tried (apologies to Churchill). I've just read Eran's rather overblown "XAuth - a Terrible, Horrible, No Good, Very Bad Idea", and I see that the same objections are being tossed around; I'm going to rebut them here to save time in the future.

Let's take this from the top. XAuth is a proposal to let browsers remember that sites have registered themselves as a user's identity provider and let other sites know if the user has a session at that site. In other words, it has the same information as proprietary solutions that already exist, except that it works across multiple identity providers. It means that when you go to a new website, it doesn't have to ask you what your preferred services are, it can just look them up. Note that this only tells the site that you have an account with Google or Yahoo or Facebook or Twitter, not what the account is or any private information.

Some objections to all of this are natural; I had them too. Below are my answers (note: I'm not speaking for Google here, just myself, but I believe these answers are consistent with what people like Chris Messina and Joseph Smarr are thinking).

- Objection: The implementation relies on a single domain. Answer: The current implementation does this due to restrictions in browser security models. There is no essential reason for that single domain to exist; no data is stored on the domain's servers and no web services are accessed from it. It just hosts the JS used to implement the API today. In theory one could write a browser extension that maps "xauth.org" to "127.0.0.1", serve up the JS from a local web server, and XAuth would work fine. A better use of time is to convince the browser vendors to support XAuth natively. Which leads to objection #2...

- Objection: This should be done by the browsers, not by a back door via a JS API. Answer: Yes, it absolutely should, and the best and fastest way to make that happen is to get a deployed solution and user experience supported by many sites as quickly as possible, then swap out the implementation. It is trivial to replace the XAuth JS core with calls to a browser solution. In fact it'd be great to figure out a good way to make this happen automatically with feature sniffing, though for that we'd need a very stable JS interface. And for that we need... field deployment experience! XAuth is a great bootstrap to a browser centric future solution.

- Objection: But the central domain name should not be managed by a single party, even in the interim. Answer: Yes, that's true, and the parties involved are working to get it managed by a neutral third party who can be trusted not to do something bad. Note that we can know fairly easily if some domain owner starts to do bad things, as they'd have to mess around with the publicly visible JS to get information to go anywhere but the users' local computer.

- Objection: It should be opt-in per browser, not opt-out. Answer: Note that XAuth doesn't prevent opt-in semantics on the part of identity providers, it just doesn't enforce it. All other things being equal, yes, it would be better to force the default to "off" and ensure users understand what they're doing when they turn it "on". The last bit is hard; a choice that users don't understand is even worse; it doesn't really solve anything but hurts adoption -- and gives proprietary alternatives, which don't require opt-in, a big advantage. Again, a browser-native alternative may be able to solve this. An XAuth ecosystem, already in place, ready for browsers to use, is a powerful incentive for them to do this.

Eran writes: "This is a misguided attempt to solve a problem browser vendors have failed to address. It is true that getting browser vendors to care about identity and innovate in the space is a huge challenge, but solving it with a server-hosted, centralized solution goes against everything the distributed identity movement has tried to accomplish over the past few years." (Emphasis mine.) It is precisely my hope that XAuth will help bootstrap the ultimate goals of the distributed identity movement, and that it will actually accomplish things in a short time frame while setting the environment up for a better solution in the future.

[Updated: You may want to refer to the original announcement of XAuth back in April, where many of these objections have already been answered.]

2010/05/27

Google I/O, Salmon, and the Open Web

Last week was intense, and I'm just now coming up for air from the backlog. We launched a huge expansion to the Buzz API at Google I/O. My contribution was to ensure that PubSubHubbub real time updates flow for the new feed URLs as well as the older ones; as part of this, we also enabled "fat pings" to the hub from Buzz. "Fat pings" in this case means that we're doing an active push of each full update from our back end systems through to the hub, so the hub never needs to call back to the feeds to retrieve updates. This is a more complicated approach, but reduces the overall server load and makes consistency guarantees easier for globally distributed systems.

Just before Google I/O, I went to IIW10 and talked about Salmon, LRDD, Webfinger, and OpenID Connect. The air at IIW was thick with new specifications and updates. I would've liked to have participated more, but I was helping to roll out PubSubHubbub for Buzz and getting ready for the "Bridging the Islands" Google I/O talk I was to give with Joseph Smarr the next day.

And then, my laptop crashed. I blame Chris Messina -- Joseph and I were stealing some of his slides, and I was editing his slide deck when the system froze up and refused to boot. Turned out later that accessing that file somehow corrupted the disk encryption driver. That's right, Chris Messina's slide decks are powerful enough to destroy laptops.

Fortunately, we recovered and had a great session (some audience notes here). Here's a screencast of the cross-site Salmon mention demonstrated in the session. This shows a user on Cliqset mentioning a couple of buddies using other services (StatusNet and a scrappy startup called Atollia), having information flow between the sites, and having mentions be pushed to the other services. The big take-away here is that these services don't need to pre-register or federate with each other any more than they need to federate to send email back and forth; it all Just Works.

2010/03/11

Blogger Template Designer in draft!

Awesome new template designer from the Blogger team, now on draft.blogger.com. I've converted this blog to one of the new templates, and it's a huge improvement. It's very easy to play around with different looks, the new templates look great, and a lot more are on the way. Nice work!

2010/02/25

Salmon Protocol RFC draft Now Available

For the past couple of weeks, I've been banging on code and XML to work out a coherent draft spec for the Salmon Protocol. Although Salmon is intended to primarily be a glue protocol, tying together a bunch of disparate existing pieces into an understandable and coherent framework turns out to be a lot of work. Now starts a slog through a bunch of issues (including a backlog on Magic Signatures that needs to be addressed) and updating the demo code and libraries to stay in sync with the spec.

Please join the salmon-protocol group if you're interested in working on the Salmon specification and/or writing code that implements it.

2010/02/11

Webfinger now available for Google public profiles

If you've seen ReadWriteWeb's "Email as Identity", you know that Brad just turned on Webfinger for anyone with a public Google profile. What this means is that, if (and only if) you've enabled your public Google profile at http://www.google.com/profiles/{username}, you can now use Webfinger to look up information you've added to your profile. Here's the one for john.panzer for example. By default this exposes only generic service endpoints, not actual information about the user. But you can link it to private or public feeds, services, or basically anything you want to associate with your online identity.

A next step for Salmon is to let users add a link to their public key from their Webfinger discovered info. Then given an email address it will be trivial to look up their preferred public key. It'd look something like this via the discovery tool:

links {
  rel: "magic-public-key"
  type: "application/magic-public-key"
  href: "data:application/magic-public-key;,RSA.mV...ww.AQAB"
}

2010/02/09

Google Buzz and Salmon

You may have heard of a small launch this morning: Google Buzz is being rolled out to all Gmail users right now.

There's an obvious connection to the Salmon Protocol; DeWitt just put up a blog post giving the big picture for Buzz and open protocols on the Social Web blog, and technical details on the Google Code blog from Brian Stoler. Most importantly, there's a Labs Buzz API. Here's the take-away:

We'd like to take this opportunity to invite developers to join us as we prepare the Google Buzz API for public launch. Our goal is to help create a more social web for everyone, so our plan for the Buzz API is a bit unconventional: we'd like to finalize this work out in the open, and we ask for your participation. By building the Google Buzz API exclusively around freely available and open protocols rather than by inventing new proprietary technologies, we believe that we can work together to build a foundation for generations of sites to come. We're ready to open the doors and share what we've been working on, and we'd like for you to join us in reaching this goal. - Join the Conversation

This is one of the motivations behind Salmon as an open, non-proprietary, standards-based, and interoperable protocol. This goes two ways:

- Since Buzz can pull in feed based data from anywhere, we want Buzz comments and likes on that data to flow back upstream to sources like Flickr and blog posts.

- As services consume the public Buzz streams, we want to make it easy for them to also send salmon back upstream to Buzz as well.

And of course we plan to do this in a way that's decentralized and isotropic, with no most favored site. Buzz is no more central than any other service that talks the necessary protocols. Come join us on the Buzz API Group to talk more about Buzz and how it's leveraging open, decentralized standards.

Magic Signatures

Just wrote up a detailed Internet-Draft for the Salmon signature mechanism; since it's an outgrowth of the "magic security pixie dust" for Salmon I'm calling it Magic Signatures for now. It approximately matches the Magic Sig demo as well. Feedback welcomed!

(Updated: Just changed the text at http://www.salmon-protocol.org/salmon-protocol-summary as well, hopefully it makes a bit more sense now.)

2010/01/29

Salmon's 'Discoverable URIs' and PKI

One issue that's cropped up in Salmon is how to identify authors. Mostly, feed syndication protocols hand-wave about this and at most specify a field for an email address. Atom goes one step further and provides a way to specify a URI for an author, which could be a web site or profile page.

The main things that Salmon needs are (1) a stable, user-controlled identifier that can be used to correlate messages and (2) a way to jump from that identifier to a public key used for signing salmon. The existing atom:author/uri element works fine for #1. Webfinger+LRDD discovery can then take over for #2. What comes out of that process is a public key suitable for verifying the provenance (authorship) of a salmon.

Now for the details. It turns out that the flow for Webfinger is pretty stable, so any time you have an email like identifier for an author you can just slap "acct:" in front and things will Just Work. But I don't want to limit authorship only to acct: URIs - I should be able to use any reasonable URI in the author/uri field and have things work. By this I mean any URI that I can perform the right flavor of XRD discovery on and get out the public key. It turns out that the existing specs cover 90% of this flow, but some things, like the order in which to look for links, remain unspecified. If they remain unspecified by the time Salmon gets real deployment, then Salmon will need to fill in the gaps in its specification. I'm hoping that's not necessary but it won't be a blocker if it is.
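The 'slap "acct:" in front' rule is simple enough to sketch. The helper name and the exact checks below are assumptions for illustration, not part of any spec.

```python
def to_discoverable_uri(identifier: str) -> str:
    """Turn an author identifier into a URI suitable for discovery.

    Email-like identifiers get an "acct:" prefix; anything that already
    looks like a URI is passed through untouched.
    """
    identifier = identifier.strip()
    if identifier.startswith("acct:") or "://" in identifier:
        return identifier
    if "@" in identifier:
        return "acct:" + identifier
    raise ValueError("not an email-like identifier or URI: %r" % identifier)

author_uri = to_discoverable_uri("jpanzer@google.com")
```

What happens after this step (which discovery flavor to run, and in what order to look for links) is exactly the part the existing specs leave open.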

Also, there isn't an existing agreed-upon term that covers both Webfinger identifiers and the kind of URIs that you can perform LRDD/XRD discovery on, such as https://myprofile.com/myname. Personally I would prefer to call them all Webfinger IDs but for now I'm calling them all discoverable URIs. Which is a terrible name since, obviously, you don't need to discover the URI as that's what you're starting from. Hopefully that will be motivation to get people to agree on a better name. Also, this is obviously useful for more than just user account identifiers. To start with, automatic services and sites will want to be able to participate as well. For example, did you know that the Blogger service has a public signing key? It would be good to have that published somewhere more discoverable than this blog post.

2010/01/19

A Web Wide Public Key Infrastructure

In my last post, I talked about Magic Signatures, the evolution of Salmon's Magic Security Pixie Dust into something concrete. There's an important bit missing, which I'll talk about now: Public key signatures are fairly useless without a way to discover and use other people's public keys in a secure, reliable way. We need a distributed, easily deployable public key infrastructure. Let's build one.

The basic plan for Salmon signature verification is fairly simple: Use Webfinger discovery on the author of the Salmon to find their Magic Signature public key, if they have one. To find this, you first get the Webfinger XRD file, then look for a "magickey" link:

<Link rel="http://salmon-protocol.org/ns/magickey" href="https://www.example.org/0B6A58300/publickey" />

Retrieve the magickey resource, parse it into a public key in your favorite library, and use that to verify the signature of the original Salmon you started with.

A few notes:

- Retrievals of these documents should be done via TLS and certificates checked; if any step in the chain isn't done via checked TLS, the result may be vulnerable to MITM or DNS poisoning attacks.

- The public key is per-user and is effectively self-signed. While I anticipate most keys will be generated by large providers, it is possible for users to generate their own public/private keypairs and upload only the public key to the Web.

- Maintaining data about expired and revoked keys will be needed too, but first things first.

The next question is what format that public key should be. The obvious choice is an X.509 PKI certificate PEM file, but this turns out to pull in a ton of baggage; you can't even parse one of these using available App Engine libraries at the moment. This is primarily due to the use of ASN.1 DER encoding, which is usually dealt with via a linked-in C library. X.509 is also inherently complex and overkill for what we need; it's based on a hierarchical model of CAs which doesn't map well to Salmon's decentralized model.

When you dig down into things, it turns out that an RSA public key itself is fairly simple. You have two numbers, a modulus (n, for some reason) and an exponent (e). You need to serialize and deserialize these numbers in a standard way. Most libraries let you pass in and access these values. The only catch is that the numbers themselves can be very, very big -- bigger than any native number on any system, so you need something like a bignum library to deal with these numbers. At the end of the day, though, you're just dealing with a pair of numbers. You don't need complicated formats for that.

So, here's a very simple way to represent this in a web-safe way:

"RSA." + urlsafe_b64_encode(to_network_bytes(modulus)) + "." + urlsafe_b64_encode(to_network_bytes(exponent))The output is two base64 encoded strings, separated by a period, and prefixed with the key type:

RSA.mVgY8RN6URBTstndvmUUPb4UZTdwvwmddSKE5z_jvKUEK6yk1u3rrC9yN8k6FilGj9K0eeUPe2hf4Pj-5CmHww==.AQAB

To parse this, you split on the period, urlsafe_b64_decode each piece into bytes, and then turn the bytes into bignums using your library. (I'm using the PyCrypto bytes_to_long and long_to_bytes functions to play around with this at the moment.)
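A minimal sketch of writing and parsing this format, using Python's built-in integers in place of the PyCrypto helpers mentioned above. The function names are hypothetical, and it assumes "network bytes" means big-endian with no leading zero bytes.

```python
import base64

# Example key copied from the text above.
KEY = ("RSA.mVgY8RN6URBTstndvmUUPb4UZTdwvwmddSKE5z_jvKUEK6yk1u3rrC9yN8k6"
       "FilGj9K0eeUPe2hf4Pj-5CmHww==.AQAB")

def _int_to_b64(n: int) -> str:
    """Big-endian bytes of n, then URL-safe base64."""
    raw = n.to_bytes((n.bit_length() + 7) // 8, "big")
    return base64.urlsafe_b64encode(raw).decode("ascii")

def _b64_to_int(text: str) -> int:
    """Inverse of _int_to_b64; tolerates missing '=' padding."""
    padded = text + "=" * (-len(text) % 4)
    return int.from_bytes(base64.urlsafe_b64decode(padded), "big")

def encode_rsa_public_key(modulus: int, exponent: int) -> str:
    return "RSA." + _int_to_b64(modulus) + "." + _int_to_b64(exponent)

def parse_rsa_public_key(text: str):
    """Split on the period and return (modulus, exponent) as integers."""
    key_type, mod_b64, exp_b64 = text.split(".")
    if key_type != "RSA":
        raise ValueError("unsupported key type: " + key_type)
    return _b64_to_int(mod_b64), _b64_to_int(exp_b64)

modulus, exponent = parse_rsa_public_key(KEY)
```

Splitting on the period is safe because the URL-safe base64 alphabet never contains one.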

(There might possibly also be a need for a Subject attached to the key itself, to prevent Joe from pointing at Bob's key and claiming it as his own. Joe still couldn't sign things as Bob but he could potentially claim Bob's output as his own. So, add the base64 encoding of Joe's Webfinger ID to the public key. It's not clear to me if this is needed quite yet.)

You may also want to store and retrieve private keys; it turns out the private keys just need one more value, d, along with n and e. Append this to the end as an optional parameter. Thoughts? Anything missing? You can also take a look at the in-progress Python code to deal with this format. It's pretty trivial to write and parse. I imagine that dealing with parsing additional algorithms will be slightly more complicated, but nothing compared with the complication of actually implementing said algorithms.

(All of this still of course relies on X.509 certificates underlying the SSL connections used to retrieve the user-specific public keys. That's fine, because those certificates are hidden underneath existing libraries and don't need to be visible to Salmon code at all.)

This is a general purpose, lightweight discovery mechanism for personal signing keys. It works well for Salmon; once widely deployed, it would be useful for other purposes as well.

(Edited to update thoughts on Subject, which, I think, is not needed.)

2010/01/12

Magic Signatures for Salmon

In writing the spec for Salmon we soon discovered that what we really wanted was S/MIME signatures for the Web. In other words, given a message, let you sign it with a private key, and let receivers verify the signature using the corresponding public key. Signing and verifying are pretty well understood, but in practice canonicalizing data and signing is hard to get right. Making sure that the mechanism adopted is really deployable and interoperable, even in restricted environments, is a top priority for Salmon.

I'm calling this the "Magic Signature" mechanism because it's not really Salmon-specific and you can analyze it without thinking much about Salmon at all.

One of the reasons why this is hard is because of the abstraction layers that we have in place in our software. For example, encryption algorithms operate on byte sequences, but a given XML document can have many different byte sequence serialized forms. Even JSON isn't immune to this, though mandating UTF-8 certainly helps. So, the first thing to make really simple is the serialization format. Here's the Magic Signature serialization algorithm:

b64_data = urlsafe_b64_enc(utf8text)

In other words, serialize your data however your libraries let you into utf8 text, then base64 encode the resulting bytes, using the url safe variant of base64. That's the actual string you sign, and it's nearly impossible to mess up that string as it's 7 bit ASCII, uses no characters known to ever be escaped by anything, and is mostly an uninterpreted blob of text as far as your libraries and transport layers are concerned. The one caveat is that some transports may need to insert linebreaks/whitespace due to line length limits -- this can be solved by squeezing out all whitespace (which is never part of the data) before signing or validating.
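In Python, the serialization step and the whitespace squeeze might look like the following sketch. The function names are made up for illustration.

```python
import base64

def magic_serialize(utf8text: str) -> str:
    """Encode the text as UTF-8 bytes, then as URL-safe base64 (7-bit ASCII)."""
    return base64.urlsafe_b64encode(utf8text.encode("utf-8")).decode("ascii")

def squeeze_whitespace(b64_data: str) -> str:
    """Drop any whitespace a transport inserted; it is never part of the data."""
    return "".join(b64_data.split())

b64_data = magic_serialize("Salmon swim upstream!")
# Simulate a transport that wrapped the line, then undo it before signing or validating.
wrapped = b64_data[:10] + "\n  " + b64_data[10:]
recovered = squeeze_whitespace(wrapped)
```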

Signing is then standard; we'll mandate support for RSA_SHA1, meaning you take the SHA1 hash digest of that base64 data and then sign the hash using an RSA private key:

s = rsa_sign(private_key,sha1_digest(b64_data))

The result is a very big integer, which you convert to network-neutral bytes and then turn into a string with, you guessed it, urlsafe_b64_enc:

sig = urlsafe_b64_enc(to_binary(s))
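To make the sign and verify steps concrete, here is a toy sketch using textbook RSA with Python's built-in pow(). Everything about the key is an assumption for illustration: it is built from two well-known Mersenne primes, is far too small to be secure, and uses no padding. Real code should use a vetted crypto library and whatever padding scheme the spec settles on. Requires Python 3.8+ for the modular inverse.

```python
import base64
import hashlib

# Toy key material (illustrative only, NOT secure).
P = 2**127 - 1
Q = 2**107 - 1
N = P * Q                          # modulus
E = 65537                          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent

def _sha1_as_int(b64_data: str) -> int:
    """SHA1 digest of the base64 string, read as a big-endian integer."""
    return int.from_bytes(hashlib.sha1(b64_data.encode("ascii")).digest(), "big")

def magic_sign(b64_data: str) -> str:
    """s = digest^D mod N, serialized as URL-safe base64 of big-endian bytes."""
    s = pow(_sha1_as_int(b64_data), D, N)
    raw = s.to_bytes((s.bit_length() + 7) // 8, "big")
    return base64.urlsafe_b64encode(raw).decode("ascii")

def magic_verify(b64_data: str, sig: str) -> bool:
    """Check that sig^E mod N equals the digest of the received base64 data."""
    padded = sig + "=" * (-len(sig) % 4)
    s = int.from_bytes(base64.urlsafe_b64decode(padded), "big")
    return pow(s, E, N) == _sha1_as_int(b64_data)

signature = magic_sign("U2FsbW9uIHN3aW0gdXBzdHJlYW0h")
```

Note that both sides hash the base64 string itself, never the decoded content, which is what makes the scheme insensitive to re-serialization.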

Now for the ugly bit: Since the whole premise of this is that the receiver is not going to be able to create exactly the same serialization of utf8text that the sender did, you need to help the receiver out by sending it the exact b64_data used to compute the original signature. Since it's base64 encoded, it's effectively armored not only against vagaries of transport protocols but also software stacks and frameworks.

Since you're sending the base64 data, and it's trivial to base64-decode it, there's no point in sending the original data as well. So you just send the content, wrapped in its base64 envelope, plus a signature. Call this a "Magic Envelope":

<?xml version='1.0' encoding='UTF-8'?>

<me:env xmlns:me='http://salmon-protocol.org/ns/magic-env'>

<me:data type='application/atom+xml' encoding='base64'>

PD94bWwgdmVyc2lvbj0nMS4wJyBlbmNvZGluZz0nVVRGLTgnPz4KPGVudHJ5IHhtbG5zPSdodHRwOi8vd3d3LnczLm9yZy8yMDA1L0F0b20nPgogIDxpZD50YWc6ZXhhbXBsZS5jb20sMjAwOTpjbXQtMC40NDc3NTcxODwvaWQ-ICAKICA8YXV0aG9yPjxuYW1lPnRlc3RAZXhhbXBsZS5jb208L25hbWU-PHVyaT5hY2N0OmpwYW56ZXJAZ29vZ2xlLmNvbTwvdXJpPjwvYXV0aG9yPgogIDx0aHI6aW4tcmVwbHktdG8geG1sbnM6dGhyPSdodHRwOi8vcHVybC5vcmcvc3luZGljYXRpb24vdGhyZWFkLzEuMCcKICAgICAgcmVmPSd0YWc6YmxvZ2dlci5jb20sMTk5OTpibG9nLTg5MzU5MTM3NDMxMzMxMjczNy5wb3N0LTM4NjE2NjMyNTg1Mzg4NTc5NTQnPnRhZzpibG9nZ2VyLmNvbSwxOTk5OmJsb2ctODkzNTkxMzc0MzEzMzEyNzM3LnBvc3QtMzg2MTY2MzI1ODUzODg1Nzk1NAogIDwvdGhyOmluLXJlcGx5LXRvPgogIDxjb250ZW50PlNhbG1vbiBzd2ltIHVwc3RyZWFtITwvY29udGVudD4KICA8dGl0bGU-U2FsbW9uIHN3aW0gdXBzdHJlYW0hPC90aXRsZT4KICA8dXBkYXRlZD4yMDA5LTEyLTE4VDIwOjA0OjAzWjwvdXBkYXRlZD4KPC9lbnRyeT4KICAgIA==</me:data>

<me:alg>RSA-SHA1</me:alg>

<me:sig>EvGSD2vi8qYcveHnb-rrlok07qnCXjn8YSeCDDXlbhILSabgvNsPpbe76up8w63i2fWHvLKJzeGLKfyHg8ZomQ==</me:sig>

</me:env>
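Building an envelope like the one above takes only a few lines. This is a minimal sketch using Python's standard ElementTree; `make_envelope` is a hypothetical helper name, and it assumes you have already computed the base64 data and the signature string.

```python
import xml.etree.ElementTree as ET

ME_URI = "http://salmon-protocol.org/ns/magic-env"


def make_envelope(b64_data: str, mime_type: str, sig: str) -> str:
    """Wrap already-encoded data and its signature in a me:env element."""
    ET.register_namespace("me", ME_URI)  # serialize with the 'me' prefix
    env = ET.Element("{%s}env" % ME_URI)
    data = ET.SubElement(
        env, "{%s}data" % ME_URI, {"type": mime_type, "encoding": "base64"}
    )
    data.text = b64_data
    ET.SubElement(env, "{%s}alg" % ME_URI).text = "RSA-SHA1"
    ET.SubElement(env, "{%s}sig" % ME_URI).text = sig
    return ET.tostring(env, encoding="unicode")
```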

And on the receiving side, you calculate the sha1_digest on the base64 data exactly as it was sent and verify the signature against that digest; then you base64_decode the data to get the original content. If it works out, you use the resulting data, in this case a Salmon that was hidden inside the magic envelope:

<?xml version="1.0" encoding="utf-8"?><entry xmlns="http://www.w3.org/2005/Atom">

<id>tag:example.com,2009:cmt-0.44775718</id>

<author><name>test@example.com</name><uri>acct:jpanzer@google.com</uri></author>

<thr:in-reply-to ref="tag:blogger.com,1999:blog-893591374313312737.post-3861663258538857954" xmlns:thr="http://purl.org/syndication/thread/1.0">tag:blogger.com,1999:blog-893591374313312737.post-3861663258538857954

</thr:in-reply-to>

<content>Salmon swim upstream!</content>

<title>Salmon swim upstream!</title>

<updated>2009-12-18T20:04:03Z</updated>

<me:provenance xmlns:me="http://salmon-protocol.org/ns/magic-env"><me:data encoding="base64" type="application/atom+xml">PD94bWwgdmVyc2lvbj0nMS4wJyBlbmNvZGluZz0nVVRGLTgnPz4KPGVudHJ5IHhtbG5zPSdodHRwOi8vd3d3LnczLm9yZy8yMDA1L0F0b20nPgogIDxpZD50YWc6ZXhhbXBsZS5jb20sMjAwOTpjbXQtMC40NDc3NTcxODwvaWQ-ICAKICA8YXV0aG9yPjxuYW1lPnRlc3RAZXhhbXBsZS5jb208L25hbWU-PHVyaT5hY2N0OmpwYW56ZXJAZ29vZ2xlLmNvbTwvdXJpPjwvYXV0aG9yPgogIDx0aHI6aW4tcmVwbHktdG8geG1sbnM6dGhyPSdodHRwOi8vcHVybC5vcmcvc3luZGljYXRpb24vdGhyZWFkLzEuMCcKICAgICAgcmVmPSd0YWc6YmxvZ2dlci5jb20sMTk5OTpibG9nLTg5MzU5MTM3NDMxMzMxMjczNy5wb3N0LTM4NjE2NjMyNTg1Mzg4NTc5NTQnPnRhZzpibG9nZ2VyLmNvbSwxOTk5OmJsb2ctODkzNTkxMzc0MzEzMzEyNzM3LnBvc3QtMzg2MTY2MzI1ODUzODg1Nzk1NAogIDwvdGhyOmluLXJlcGx5LXRvPgogIDxjb250ZW50PlNhbG1vbiBzd2ltIHVwc3RyZWFtITwvY29udGVudD4KICA8dGl0bGU-U2FsbW9uIHN3aW0gdXBzdHJlYW0hPC90aXRsZT4KICA8dXBkYXRlZD4yMDA5LTEyLTE4VDIwOjA0OjAzWjwvdXBkYXRlZD4KPC9lbnRyeT4KICAgIA==</me:data><me:alg>RSA-SHA1</me:alg><me:sig>EvGSD2vi8qYcveHnb-rrlok07qnCXjn8YSeCDDXlbhILSabgvNsPpbe76up8w63i2fWHvLKJzeGLKfyHg8ZomQ==</me:sig></me:provenance></entry>

Note that the signature, and the base64 data, is still carried inside a "provenance" element of the salmon for future verification.
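The receiving side can be sketched the same way. This is an illustration under assumptions, not the demo's actual code: `open_envelope` is a hypothetical name, `rsa_verify` is a caller-supplied function assumed to take the digest bytes and the raw signature bytes and return True or False, and the input is expected to be the me:env (or me:provenance) element itself.

```python
import base64
import hashlib
import xml.etree.ElementTree as ET

ME = "{http://salmon-protocol.org/ns/magic-env}"


def open_envelope(xml_text: str, rsa_verify) -> bytes:
    """Return the decoded content, or raise ValueError if a check fails.

    rsa_verify is a hypothetical callable:
    (digest bytes, signature bytes) -> bool.
    """
    env = ET.fromstring(xml_text)
    # Squeeze out whitespace picked up in transit before hashing or decoding.
    b64_data = "".join(env.findtext(ME + "data", default="").split())
    sig = "".join(env.findtext(ME + "sig", default="").split())
    alg = env.findtext(ME + "alg", default="").strip()
    if alg != "RSA-SHA1":
        raise ValueError("unsupported algorithm: %r" % alg)
    # The digest covers the base64 text, never the decoded XML.
    digest = hashlib.sha1(b64_data.encode("ascii")).digest()
    if not rsa_verify(digest, base64.urlsafe_b64decode(sig)):
        raise ValueError("signature does not verify")
    return base64.urlsafe_b64decode(b64_data)
```

Note that the content is only decoded and returned after the signature check passes, so a tampered envelope never gets unfolded.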

This is all fun to describe, but it's even more fun to play with. Take a look at http://salmon-playground.appspot.com/magicsigdemo to see this in action. When you load it, you'll see that it gives you an error -- it will refuse to sign your salmon until you correct the author URI. This is a feature; the demo checks that the signed-in user matches one of the authors of the salmon, so you need to edit the author/uri field to read "acct:<your email address here>" to make it work.

Next, you'll see the magic envelope appear. You can verify the signature, which sends a request back to the server and replies Yes or No. Or, you can unfold the envelope back into an Atom salmon to read the content. Of course, if you tamper with the salmon first it will neither verify nor unfold properly.

For Salmon-aware processors, there's little reason to use anything but the application/magic-envelope form. For syndication in general, though, it may be necessary to wrap the envelope in an Atom or RSS entry.

The source code for all of this is freely available. If you're interested in all of this, please join the Salmon discussion group.

(Updated 1/19 to include a note about squeezing out whitespace from the b64 encoded data before doing anything important with it, per gffletch's comment.)

Fork in the Road for Salmon

Happy New Year!

Over the past several weeks, I've been doing a Salmon conference roadshow, talking and listening to people about the protocol and getting feedback.

At IIW, we had a "Magic Security Pixie Dust" Salmon session which kicked around the use cases and challenges in detail. There and elsewhere I got basically two kinds of feedback: (a) The specification, especially the signatures, was too complicated; and (b) the specification, especially the signatures, was not comprehensive enough.

There was a suggestion to drop signatures entirely and just rely on reputation of the salmon generators, who would be on the hook for vouching for the identity of their users. This would simplify the protocol but at the cost of giving it effectively the same security characteristics as email. This was initially attractive and I spent some time playing with what that would look like. Unfortunately, I am pretty sure that it would just make operation more complex and adoption more problematic, and you'd likely need to pre-federate every salmon generator/acceptor pair to get things off the ground.

At another meeting I was fortunate enough to get Ben Laurie in the room, and he suggested a simple mechanism for comprehensive signatures. I've been playing with it and it's looking pretty good. By that I mean that it works, and I think it's implementable even with stone knives and bearskins, that is, with minimal library support.

And, in parallel, many people have started talking about additional use cases for Salmon, most of which benefit from a simple signing mechanism:

- A personal store of comments, posts, and activities you do around the Web, kept in your online storage mechanism of choice, used for backup, archiving, and search purposes ("what was that conversation I had last week?...")

- Salmon for mentions (@replies to a particular person) which just send a Salmon, not necessarily as a reply to a piece of content, but to a person as a mentionee.

- Structured data with verifiable provenance for things like bids and asks

- Building up and using distributed reputation based on analysis of comments, ratings, reviews, etc. published via Salmon.

- ...etc

Sometimes, the more general problem is easier to solve. After a lot of thinking, talking, and coding, I've come around to believing in the more comprehensive solution.

This doesn't change the outline of Salmon specified at http://salmon-protocol.org. It drops the sign-selected-fields mechanism, and lets you sign the entire Salmon, including any extension data. As an added bonus, it doesn't matter what format you choose, what your character encoding or Infoset serialization is; it'll just work regardless.

Since it is a public key signature mechanism, it does require a public key infrastructure. Fortunately, we have the pieces to build one easily and simply at hand.

I'll blog first about the signature mechanism, and second about the public key mechanism, in the next couple of days.

Subscribe to:

Posts (Atom)

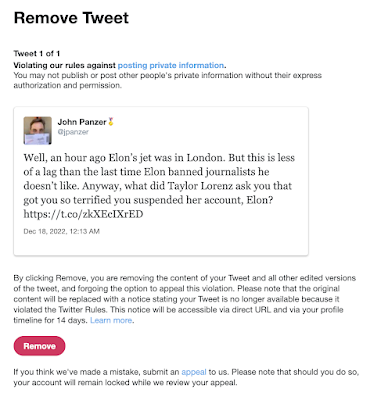

Suspended by the Baby Boss at Twitter

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

We're doing a lot of daily meetings these days. Often they're a waste of time; sometimes they're alifesaver. I think they'...

-

Update 6/2/2023: I was right . These are my observations for our local conditions (Santa Clara County, July 10-12, 2020), which to summarize...