There are tens of millions of RSS and Atom feeds published on the Web. And nearly all of them are copyrighted.

If an author doesn't explicitly give up all rights to a work, which might be a bit tricky, it's automatically copyrighted in the United States and most other countries. Of course the same is true of web pages. But web pages are mostly intended to be viewed in a browser. Feeds are generally intended to be syndicated, which means that their content is going to be sliced and diced in various and unforeseeable ways. This makes a difference.

In what ways is an application allowed to copy and present a given feed's content? To start with, it can do things covered by fair use (*). There are some interesting issues around what exactly fair use means in the context of web feeds, but ignore those for the moment. What about copying beyond what fair use allows?

It would be awfully helpful if every feed simply included a machine-readable license, for example a <link rel="license" href="..."/> element (http://www1.tools.ietf.org/wg/atompub/draft-snell-atompub-feed-license-00.txt). We could then write code that follows the author's license for things beyond fair use.
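As a rough illustration of the first step, here is a minimal Python sketch that pulls license links out of an Atom feed. It assumes the Atom 1.0 namespace and that the license link sits directly under the feed element, as in the draft linked above; the function name `feed_license_urls` and the sample feed are hypothetical.

```python
import xml.etree.ElementTree as ET

# Atom 1.0 namespace; feed-level <link> elements live in it.
ATOM_NS = "http://www.w3.org/2005/Atom"


def feed_license_urls(feed_xml: str) -> list[str]:
    """Return the href of every feed-level <link rel="license"> element.

    Hypothetical helper: only direct children of <feed> are checked, so
    per-entry license links would need a separate pass.
    """
    root = ET.fromstring(feed_xml)
    urls = []
    for link in root.findall(f"{{{ATOM_NS}}}link"):
        href = link.get("href")
        if link.get("rel") == "license" and href:
            urls.append(href)
    return urls


sample = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Example feed</title>
  <link rel="alternate" href="http://example.org/"/>
  <link rel="license" href="http://creativecommons.org/licenses/by-nc/2.5/"/>
</feed>"""

print(feed_license_urls(sample))
# prints ['http://creativecommons.org/licenses/by-nc/2.5/']
```

An empty list would mean the feed carries no machine-readable license, so an application would be limited to fair use.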

Specifically, if a feed author wanted to put their feed content in the public domain, they would simply link to the Creative Commons public domain license which includes the following RDF code:

    <rdf:RDF xmlns="http://web.resource.org/cc/" xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">
      <License rdf:about="http://web.resource.org/cc/PublicDomain">
        <permits rdf:resource="http://web.resource.org/cc/Reproduction"/>
        <permits rdf:resource="http://web.resource.org/cc/Distribution"/>
        <permits rdf:resource="http://web.resource.org/cc/DerivativeWorks"/>
      </License>
    </rdf:RDF>

The code here is a machine-readable approximation of "put this in the public domain".
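To make "machine-readable" concrete, the following Python sketch reads the RDF quoted above and collects what the license permits. It assumes that other Creative Commons licenses express their conditions with sibling `requires` and `prohibits` elements in the same `http://web.resource.org/cc/` namespace; the helper name `license_terms` is hypothetical.

```python
import xml.etree.ElementTree as ET

RDF_NS = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
CC_NS = "http://web.resource.org/cc/"

# The public domain RDF quoted in the post.
PUBLIC_DOMAIN_RDF = """<rdf:RDF xmlns="http://web.resource.org/cc/"
         xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">
  <License rdf:about="http://web.resource.org/cc/PublicDomain">
    <permits rdf:resource="http://web.resource.org/cc/Reproduction"/>
    <permits rdf:resource="http://web.resource.org/cc/Distribution"/>
    <permits rdf:resource="http://web.resource.org/cc/DerivativeWorks"/>
  </License>
</rdf:RDF>"""


def license_terms(rdf_xml: str) -> dict[str, list[str]]:
    """Group the first <License>'s conditions by relation (hypothetical helper)."""
    root = ET.fromstring(rdf_xml)
    lic = root.find(f"{{{CC_NS}}}License")
    if lic is None:
        return {}
    terms: dict[str, list[str]] = {"permits": [], "requires": [], "prohibits": []}
    for child in lic:
        # child.tag looks like "{http://web.resource.org/cc/}permits"
        relation = child.tag.removeprefix(f"{{{CC_NS}}}")
        if relation in terms:
            target = child.get(f"{{{RDF_NS}}}resource", "")
            # Keep just the short name, e.g. "Reproduction"
            terms[relation].append(target.removeprefix(CC_NS))
    return terms


print(license_terms(PUBLIC_DOMAIN_RDF))
```

For the public domain case, everything lands under `permits` and nothing is required or prohibited, which is the "do whatever you like" reading.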

Alternatively, if a feed author just wanted to require attribution, they'd instead use http://creativecommons.org/licenses/by/2.5/. To allow copying for non-commercial use only, they'd use the popular http://creativecommons.org/licenses/by-nc/2.5/. This license means that the content must be attributed, and may be freely copied only for non-commercial uses. Plus, of course, fair uses.
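Because the Creative Commons license URLs encode their conditions in the path ("by", "by-nc"), an application could get a first approximation of the obligations straight from the URL. This is a simplified Python sketch under that assumption, not an official parsing rule; `cc_terms` is a hypothetical name, and only `creativecommons.org/licenses/...` URLs are recognized.

```python
from typing import Optional
from urllib.parse import urlparse


def cc_terms(license_url: str) -> Optional[dict[str, bool]]:
    """Guess a Creative Commons license's conditions from its URL path.

    Hypothetical helper: returns None for anything that doesn't look like
    http://creativecommons.org/licenses/<code>/<version>/
    """
    parts = urlparse(license_url)
    path = parts.path.strip("/").split("/")
    if parts.netloc != "creativecommons.org" or len(path) < 2 or path[0] != "licenses":
        return None
    codes = path[1].split("-")  # e.g. "by-nc" -> ["by", "nc"]
    return {
        "attribution": "by" in codes,
        "noncommercial": "nc" in codes,
    }


print(cc_terms("http://creativecommons.org/licenses/by/2.5/"))
print(cc_terms("http://creativecommons.org/licenses/by-nc/2.5/"))
```

Fetching and reading the license's own RDF would be more robust than trusting the URL shape; the shortcut just keeps the example small.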

According to Creative Commons' proposed best-practice guidelines, the non-commercial license would mean that a web site re-syndicating the feed would not, in general, be able to display advertisements next to the feed content. Such an application could fall back to fair use only for that feed (perhaps showing only headlines), or it could suppress ads for that one feed. The main point is that it would know what it needed to do.
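The two options for a non-commercial feed can be written down as a small decision function. This Python sketch is one possible reading, with hypothetical names (`DisplayPlan`, `plan_for_feed`); treating a feed with no license as "fair use only" is also an assumption for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DisplayPlan:
    full_content: bool  # False: fall back to fair use, e.g. headlines only
    show_ads: bool      # whether ads may appear next to this feed's content


def plan_for_feed(noncommercial: Optional[bool], want_ads: bool) -> DisplayPlan:
    """Decide how to re-syndicate one feed.

    noncommercial is None when the feed has no machine-readable license.
    want_ads says whether the site would like ads next to this feed.
    """
    if noncommercial is None:
        # No license: stay within fair use.
        return DisplayPlan(full_content=False, show_ads=want_ads)
    if noncommercial:
        if want_ads:
            # Keep the ads, but show only what fair use allows.
            return DisplayPlan(full_content=False, show_ads=True)
        # Show the full content, but suppress ads for this one feed.
        return DisplayPlan(full_content=True, show_ads=False)
    # Commercial use permitted: full content, ads as the site prefers.
    return DisplayPlan(full_content=True, show_ads=want_ads)


print(plan_for_feed(noncommercial=True, want_ads=True))
```

Attribution, when required, would be added on top of whichever plan is chosen.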

So, in this perfect world where everything is clearly licensed, I think life is fairly simple. Let me know if you think I'm missing something.

In part 2 I intend to return to the messy real world and start complicating things.

--

(*) ...or other applicable national legal codes, since fair use applies only in the U.S. as Paul pointed out.

Tags: Feed Copyright


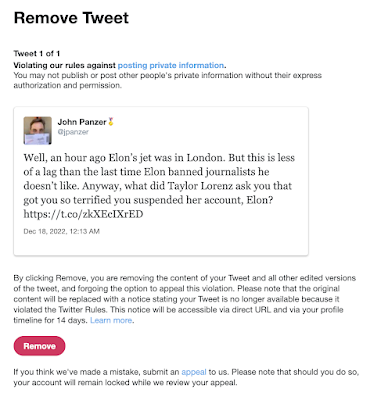

Suspended by the Baby Boss at Twitter

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Update 6/2/2023: I was right . These are my observations for our local conditions (Santa Clara County, July 10-12, 2020), which to summarize...

-

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Just a few things to bear in mind when considering what counts as "high crimes and misdemeanors". Read this list, and, however va...

"[an application] can do things covered by fair use" you say. Yet fair use is a US-only concept, so that premise only applies to feeds consumed in the US. IANAL, so I don't know how fair use applies to materials from other countries. I would say that making information available through a feed means you expect it to be copied and re-presented, so I would rephrase the question at the end of the paragraph as "What about copying beyond what you would expect to be done with a feed?"

Paul -- Yes, please forgive my US-centricism. This is a very simplified, abstracted view of things and trying to keep in mind the various national legal codes would have brought this to a grinding halt. So, I'll add this to the "complications" follow-up. For the time being, to "fair use" above please add "...or other applicable national legal codes".
