2008/02/28

A modest proposal: RESTful OpenSocial API

Yesterday, I sent a draft proposal for a RESTful API out for comment. It pulls together a lot of the things I've been working on lately: OAuth, batching, and patching. It also has a different take on JSON vs. Atom data formats. Since OpenSocial has several different kinds of data -- activities, people, and app data to start with -- a one-size-fits-all format is likely to be a poor fit for everybody. So, there's a custom mapping, expressed through metadata, that can be different for each kind of data you deal with.

2008/02/11

I'd Rather Batch than Fight

We need HTTP batching. Or at least some people do, in some situations. I think that a small optimization here can avoid re-inventing a lot of wheels.

To back up a bit, the problem I want to solve is just the one of minimizing round trips for REST-based services such as AtomPub. Not atomic transactions, nor additional semantics; just avoiding the latency incurred by multiple round trips. The motivation is basically the same as for HTTP Pipelining.

The ideal solution would work for all types of requests (not just Atom, or JSON, or ...). Batching is orthogonal to all of these and with the use of mashups it's quite possible for a client to be using any or all of them. Batching should work really well within browsers but also be usable with any HTTP libraries. And, it should be auto-discoverable, so a client can fall back to individual requests if a server doesn't support batching.

My canonical motivating example is a smart client that does an update and then a retrieval of two different but interdependent resources. The client knows that the update is almost certain to trigger a need for a refresh of the second resource, and the operations must be performed in that order because of the dependency. It turns out to be very difficult to model this as a single REST operation, and it's really two logical operations anyway.

Proposal: Take James' multipart-MIME batch proposal, but use POST instead of PATCH. (I agree with Bill de hÓra that PATCH is a stretch; if we had a BATCH method that'd be fine, but we don't right now.) Make the semantics exactly as if the requests had been sent, in order, from the same client on the same keep-alive connection. Do not provide any atomicity guarantees. Provide an easy way for a client to determine if a server supports batching. If a server does not, then a client has a trivial for-loop fallback which won't change the semantics of the request at all.
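To make the fallback concrete, here is a minimal sketch of the client side. Everything in it is an assumption for illustration: the `send_one` and `send_batch` callables, the dict-shaped requests, and the `supports_batch` flag (however the client ends up discovering that) are hypothetical names, not part of any proposal text.

```python
from typing import Callable, Dict, List, Sequence

# Hypothetical request/response shapes, e.g. {"method": "PUT", "path": "/a"}.
Request = Dict[str, object]
Response = Dict[str, object]


def send_requests(
    requests: Sequence[Request],
    send_one: Callable[[Request], Response],
    send_batch: Callable[[Sequence[Request]], List[Response]],
    supports_batch: bool,
) -> List[Response]:
    """Send requests in order, using a single round trip when the server allows it."""
    if supports_batch:
        # One POST carrying all the requests; the server processes them in order.
        return send_batch(requests)
    # Fallback: the trivial for loop. Same order, same semantics, more round trips.
    return [send_one(request) for request in requests]
```

The point of the sketch is that the caller gets the same ordered list of responses either way, so batching stays a pure latency optimization.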

Q: Why drag in MIME? Why not just use XML/JSON/...?

A: Because you don't want to mandate a particular parser, or even a text-based format. Image uploads, for example, should work fine with this scheme. It's generic. Note that we are in fact tunnelling HTTP requests here. I'd much rather be up front and open about this than try to hide the fact by smuggling it inside some other syntax.

Q: But MIME is ugly and not supported by my language!

A: We're not trying to interoperate with mail or Usenet here, just slice up a body with a separator that is a sequence of bytes (--batch-34343434) and apply some simple mapping rules for things like method and URL path.
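As a rough sketch of how little is needed, the slicing can be done on raw bytes with no MIME library at all. The assumptions here are mine: each part is separated by the separator followed by CRLF, the batch ends with the separator followed by `--`, and each part begins with an HTTP-style request line. The actual mapping rules would come from the batch proposal itself.

```python
from typing import List, Tuple


def split_batch(body: bytes, separator: bytes) -> List[bytes]:
    """Slice a batch body into parts on a byte separator such as b'--batch-34343434'."""
    parts = []
    for chunk in body.split(separator)[1:]:  # anything before the first separator is ignored
        if chunk.startswith(b"--"):  # assumed closing marker: separator + "--"
            break
        # Drop exactly one CRLF on each side so binary payloads are left untouched.
        if chunk.startswith(b"\r\n"):
            chunk = chunk[2:]
        if chunk.endswith(b"\r\n"):
            chunk = chunk[:-2]
        parts.append(chunk)
    return parts


def request_line(part: bytes) -> Tuple[str, str]:
    """Map the (assumed) first line of a part, e.g. 'PUT /a HTTP/1.1', to (method, path)."""
    first_line = part.split(b"\r\n", 1)[0].decode("ascii")
    method, path = first_line.split(" ", 2)[:2]
    return method, path


body = (
    b"--batch-34343434\r\n"
    b"PUT /a HTTP/1.1\r\n\r\nhello\r\n"
    b"--batch-34343434\r\n"
    b"GET /b HTTP/1.1\r\n\r\n\r\n"
    b"--batch-34343434--\r\n"
)
parts = split_batch(body, b"--batch-34343434")
methods_and_paths = [request_line(p) for p in parts]
```

One caveat of this naive split: the separator must not occur inside any payload, which is why the sender picks it per batch.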

Q: Do we really need this?

A: If you think you do, you can implement it. If you don't, you can skip it. You'll interoperate either way. Let the market decide what's best.

2008/02/08

Happiness

At 4:27pm today, while walking across the lawn at work, feeling the last rays of warm amber sunlight, and listening to a small fountain: Happy. Nothing big to feel happy about, really, other than finally feeling better after six days of maybe-a-flu, and my dog is going to be okay after his surgery last week, and it's nearly the weekend and I'll be spending most of it hanging out with my three-year-old son.

Maybe it's possible to be happy over nothing big, given the right frame of mind. That's a good frame to keep handy.

Oh, and the feed for this blog should now be fixed. (The DNS hijinks last weekend left it misconfigured.) Happily, I'd been too sick to post much anyway...

2008/02/07

OpenID Foundation += {Google, IBM, Microsoft, Verisign, Yahoo}

Much coverage here. The OpenID Foundation is an interesting entity, not responsible for technical work but responsible for protecting the brand and the IP associated with OpenID. It's awesome that DeWitt is the corporate representative for Google.

Update 2/10: Added the missing IBM, and alphabetized.

2008/02/04

SG Foo Camp Reflections

SG Foo has come and gone in a very high-energy weekend. Thank you Scott and David (and the folks at O'Reilly) for putting it together. Unfortunately I was fighting off a cold nearly the whole time, which meant I missed the games of Werewolf...

What happened? We planted some seeds. We'll have to wait and see if they germinate. There was a lot of discussion about XMPP and PubSub as a key protocol for pushing real-time and near-real-time social network data. I want to investigate this more but the XMPP standard is forbidding... Joseph Smarr and I organized a spirited discussion about how to have social data portability policies and avoid social data DRM. We made some progress around OAuth and OpenID. I met Dare Obasanjo. Data Portability was discussed at length. I learned some interesting things about publishing from Teresa Nielsen Hayden. I discovered that Sebastopol is a town full of very nice people.

I Fought the DNS, and the DNS Won

Ugh. Over the weekend, the DNS provider for abstractioneer.org helpfully upgraded their software... and the new code refused to let me make abstractioneer.org be a CNAME for ghs.google.com, claiming it's illegal. (No, it's not, it just keeps you from using mail to that domain and a couple of other things, none of which I care about.)

All of which means that (a) abstractioneer.org is now www.abstractioneer.org; (b) my OpenID has now changed, with the old one (abstractioneer.org) doing a 302 redirect to the new one (www.abstractioneer.org). I'm viewing this as an experiment; let's see what breaks.

2008/02/01

The Unbearable Lightness of the Social Graph API

Cool. Looks like we've announced Brad's Social Graph API! Looking forward to talking with people at Social Graph Foo Camp about this. One note... one of the pieces of public data which the API leverages is OpenID delegate links. So a user can prove ownership of an entire set of appropriately-linked Social Graph nodes.

The best place to play with the API (and see what data it's returning) is with the Playground tool.

Subscribe to:

Comments (Atom)

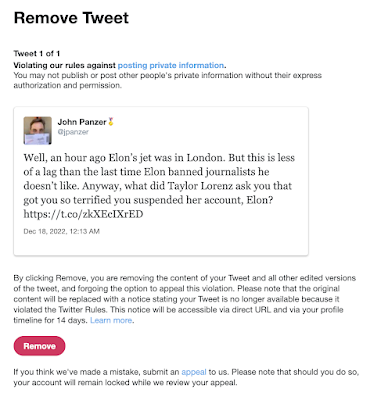

Suspended by the Baby Boss at Twitter

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Update 6/2/2023: I was right . These are my observations for our local conditions (Santa Clara County, July 10-12, 2020), which to summarize...

-

Just a few things to bear in mind when considering what counts as "high crimes and misdemeanors". Read this list, and, however va...