We need HTTP batching. Or at least some people do, in some situations. I think that a small optimization here can avoid re-inventing a lot of wheels.

To back up a bit: the problem I want to solve is simply minimizing round trips for REST-based services such as AtomPub. Not atomic transactions, nor additional semantics; just avoiding the latency incurred by multiple round trips. The motivation is basically the same as for HTTP pipelining.

The ideal solution would work for all types of requests (not just Atom, or JSON, or ...). Batching is orthogonal to all of these, and with mashups it's quite possible for a client to be using any or all of them. Batching should work well within browsers but also be usable with any HTTP library. And it should be auto-discoverable, so a client can fall back to individual requests if a server doesn't support batching.

My canonical motivating example is a smart client that updates one resource and then retrieves a second, different but interdependent resource. The client knows that the update will almost certainly require a refresh of the second resource, and it needs the operations performed in that order because of the dependency. This turns out to be very difficult to model as a single REST operation, and it's really two logical operations anyway.

Proposal: take James' multipart-MIME batch proposal, but use POST instead of PATCH. (I agree with Bill de hÓra that PATCH is a stretch; if we had a BATCH method, that'd be fine, but right now we don't.) Make the semantics exactly as if the requests had been sent, in order, by the same client on the same keep-alive connection. Provide no atomicity guarantees. Provide an easy way for a client to determine whether a server supports batching. If it does not, the client has a trivial for-loop fallback that doesn't change the semantics of the requests at all.
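A minimal Python sketch of what a client might put on the wire for the update-then-retrieve case. The part layout is an assumption and may differ in detail from James' proposal: here each part is labelled `Content-Type: application/http` and carries one complete HTTP/1.1 request, and the envelope is a `multipart/mixed` body sent with POST. The `Request` type, the `build_batch` helper, and the paths `/entries/42` and `/feeds/summary` are hypothetical, for illustration only.

```python
import uuid
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Request:
    """One HTTP request to tunnel inside the batch (hypothetical type)."""
    method: str
    path: str
    headers: dict = field(default_factory=dict)
    body: bytes = b""


def encode_request(req: Request) -> bytes:
    """Serialize as an HTTP/1.1 message: request line, headers, blank line, body."""
    headers = dict(req.headers)
    if req.body:
        headers.setdefault("Content-Length", str(len(req.body)))
    lines = [f"{req.method} {req.path} HTTP/1.1"]
    lines += [f"{name}: {value}" for name, value in headers.items()]
    return "\r\n".join(lines).encode("ascii") + b"\r\n\r\n" + req.body


def build_batch(requests: List[Request], boundary: Optional[str] = None) -> Tuple[str, bytes]:
    """Return (Content-Type value, body) for a multipart batch sent via POST.

    Assumed layout: one application/http part per request, in order. The
    server is expected to run them top to bottom, as if they had been sent
    one after another on a single keep-alive connection.
    """
    boundary = boundary or f"batch-{uuid.uuid4().hex}"
    delimiter = b"--" + boundary.encode("ascii")
    out = bytearray()
    for req in requests:
        out += delimiter + b"\r\n"
        out += b"Content-Type: application/http\r\n\r\n"
        out += encode_request(req) + b"\r\n"  # this CRLF belongs to the next delimiter
    out += delimiter + b"--\r\n"  # closing delimiter
    return f"multipart/mixed; boundary={boundary}", bytes(out)


# The canonical case: update one resource, then fetch the one it affects.
content_type, body = build_batch(
    [
        Request("PUT", "/entries/42", {"Content-Type": "application/atom+xml"}, b"<entry/>"),
        Request("GET", "/feeds/summary"),
    ],
    boundary="batch-34343434",
)
```

The order of the parts is the whole contract: nothing here implies atomicity, only that the PUT is processed before the GET.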

Q: Why drag in MIME? Why not just use XML/JSON/...?

A: Because you don't want to mandate a particular parser, or even a text-based format. Image uploads, for example, should work fine with this scheme; it's generic. Note that we are in fact tunnelling HTTP requests here. I'd much rather be up front about this than try to hide it by smuggling it inside some other syntax.
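Binary parts such as image uploads need no special encoding here; the one hard rule, from MIME itself, is that the boundary delimiter must never appear inside a payload. One way to satisfy it, sketched in Python under the assumption that the client is free to choose its own boundary string, is to pick a random boundary (in the same `batch-` style as the `--batch-34343434` separator in this post) and retry in the rare case it collides with a part's bytes. `choose_boundary` is a hypothetical helper, not part of any existing library.

```python
import os
from typing import List


def choose_boundary(parts: List[bytes], attempts: int = 10) -> str:
    """Pick a random boundary whose delimiter occurs in none of the parts.

    Parts may hold arbitrary bytes (a PNG upload, say); the only requirement
    is that the "--boundary" delimiter never appears inside a payload.
    """
    for _ in range(attempts):
        boundary = "batch-" + os.urandom(12).hex()  # 24 hex chars of randomness
        delimiter = b"--" + boundary.encode("ascii")
        if not any(delimiter in part for part in parts):
            return boundary
    raise RuntimeError("could not find a boundary absent from all parts")


png_upload = b"\x89PNG\r\n\x1a\n" + os.urandom(1024)  # stand-in for real image bytes
boundary = choose_boundary([png_upload, b"GET /feeds/summary HTTP/1.1\r\n\r\n"])
```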

Q: But MIME is ugly and not supported by my language!

A: We're not trying to interoperate with mail or Usenet here; we just slice up a body with a separator that is a sequence of bytes (--batch-34343434) and apply some simple mapping rules for things like method and URL path.
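On the server side, that slicing and mapping can be sketched in a few lines of Python. The sketch assumes each part holds a `Content-Type: application/http` header followed by one raw HTTP/1.1 request, and it skips much of what a real implementation would need (size limits, validation, error responses). `split_parts`, `parse_part`, and `ParsedRequest` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ParsedRequest:
    method: str
    path: str
    headers: Dict[str, str]
    body: bytes


def split_parts(body: bytes, boundary: str) -> List[bytes]:
    """Slice a multipart body on its boundary; return each part's raw bytes."""
    delimiter = b"--" + boundary.encode("ascii")
    body = body.split(delimiter + b"--", 1)[0]  # drop closing delimiter and epilogue
    parts = []
    for chunk in body.split(delimiter)[1:]:  # chunk [0] is the preamble, ignored
        if chunk.startswith(b"\r\n"):  # CRLF ending the delimiter line
            chunk = chunk[2:]
        if chunk.endswith(b"\r\n"):  # CRLF that belongs to the next delimiter
            chunk = chunk[:-2]
        parts.append(chunk)
    return parts


def parse_part(part: bytes) -> ParsedRequest:
    """Map one application/http part to method, path, headers and body."""
    _mime_headers, _, message = part.partition(b"\r\n\r\n")  # skip part headers
    head, _, payload = message.partition(b"\r\n\r\n")
    request_line, *header_lines = head.decode("iso-8859-1").split("\r\n")
    method, path, _version = request_line.split(" ", 2)
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip()] = value.strip()
    return ParsedRequest(method, path, headers, payload)


sample = (
    b"--batch-34343434\r\n"
    b"Content-Type: application/http\r\n\r\n"
    b"PUT /entries/42 HTTP/1.1\r\n"
    b"Content-Type: application/atom+xml\r\n"
    b"Content-Length: 8\r\n\r\n"
    b"<entry/>\r\n"
    b"--batch-34343434\r\n"
    b"Content-Type: application/http\r\n\r\n"
    b"GET /feeds/summary HTTP/1.1\r\n\r\n"
    b"\r\n"  # CRLF that precedes the closing delimiter
    b"--batch-34343434--\r\n"
)

# Parts come back in order; dispatching each to the normal handler is left out.
requests = [parse_part(p) for p in split_parts(sample, "batch-34343434")]
```

Executing the parsed requests one at a time, in list order, gives exactly the "same client, same keep-alive connection" semantics without any new server machinery.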

Q: Do we really need this?

A: If you think you do, you can implement it. If you don't, you can skip it. You'll interoperate either way. Let the market decide what's best.
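The client-side fallback really is just a loop. In this Python sketch, how `supports_batching` is determined is left open, since the discovery mechanism isn't pinned down here; `send_batch` and `send_one` are hypothetical stand-ins for whatever transport the client uses. Because a batch is defined to mean the same requests, in the same order, on one connection, both branches should leave the server in the same state; only the number of round trips differs.

```python
from typing import Callable, List, Tuple

Request = Tuple[str, str, bytes]  # (method, path, body): illustrative shape only
Response = Tuple[int, bytes]      # (status, body)


def send_all(
    requests: List[Request],
    supports_batching: bool,
    send_batch: Callable[[List[Request]], List[Response]],
    send_one: Callable[[Request], Response],
) -> List[Response]:
    """Send requests in order: one batched round trip if supported, else a loop."""
    if supports_batching:
        return send_batch(requests)
    # The fallback: same requests, same order, one round trip each.
    return [send_one(req) for req in requests]


# Fake transports that record what each round trip carried.
trips: List[List[Request]] = []


def fake_batch(reqs: List[Request]) -> List[Response]:
    trips.append(list(reqs))
    return [(200, b"") for _ in reqs]


def fake_one(req: Request) -> Response:
    trips.append([req])
    return (200, b"")


reqs = [("PUT", "/entries/42", b"<entry/>"), ("GET", "/feeds/summary", b"")]
batched = send_all(reqs, True, fake_batch, fake_one)  # one round trip
looped = send_all(reqs, False, fake_batch, fake_one)  # two round trips
```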

Subscribe to:

Post Comments (Atom)

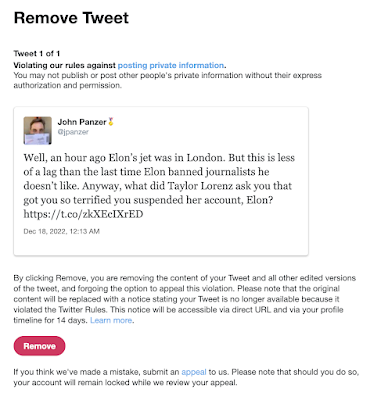

Suspended by the Baby Boss at Twitter

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Update 6/2/2023: I was right . These are my observations for our local conditions (Santa Clara County, July 10-12, 2020), which to summarize...

-

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Just a few things to bear in mind when considering what counts as "high crimes and misdemeanors". Read this list, and, however va...

Hi,

Didn't quite follow how this batching method is better than pipelining, which is much more widely supported. We use more bandwidth with pipelining due to the HTTP overhead, but we use a lot less CPU on both sides (client/server) since we don't need to parse. What's the point then?

Lior

@Liorsion: pipelining only allows GET and HEAD, eliminating the most compelling use case. (Read-only batching can already be achieved by defining an appropriate read-only feed and retrieving that.) Also, it's not turned on out of the box in Firefox or IE, so it's effectively unavailable to browser-based clients.

John,

That's true, BUT you're talking about defining something new anyway, and hoping people adopt it. It makes more sense (to me) to extend something known rather than create something further from what we have; that is, it's easier to extend pipelining than to create multipart batch requests, IMHO.

Lior

The biggest difference is in the case of browsers hosting AJAX apps. A pipelining extension requires new browsers, something that takes years. Agreement on an HTTP-based protocol, however, can be deployed in a matter of weeks.
