Doc Searls just changed his blog license to Attribution-NonCommercial-ShareAlike 2.5... in order to clearly deny splogs reblogging rights to his content. Interesting, though I think there may be some unintended fallout. But there are some cool applications for this. What if someone built a tool to make it easy to find such copyright violators (academics use these tools to find plagiarism)? It could come with an accompanying service that aggregates complaints and, when they reach a sufficiently remunerative level, sends attack lawyers after sploggers.

Update: The collective intelligence of the blogosphere is a mighty thing. In a comment below, Doc points at an open source plagiarism detector from UCSB (my alma mater) that already does Internet searches. Hmmm....
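For a rough idea of what such a copy-finding tool might do at its core, here is a minimal sketch that compares two texts by their overlapping five-word runs ("shingles"). This is a generic near-duplicate technique, not how PAIRwise or any particular detector works, and the function names and the shingle size are assumptions chosen for illustration.

```python
import re


def shingles(text, k=5):
    """Return the set of k-word shingles in text (lowercased, punctuation dropped)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Texts shorter than k words are treated as one shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def similarity(a, b, k=5):
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)
```

A splog that reposts a whole entry with a line of filler around it would still score close to 1.0, while unrelated posts score at or near 0.0. Where to set the threshold for filing a complaint is a judgment call this sketch does not settle.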

Subscribe to:

Post Comments (Atom)

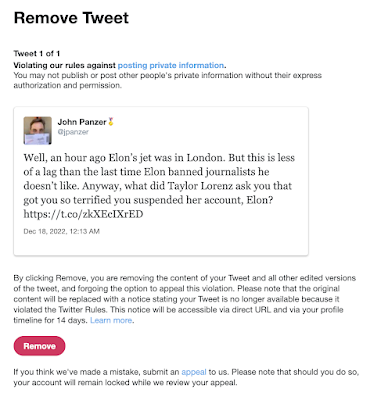

Suspended by the Baby Boss at Twitter

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Update 6/2/2023: I was right . These are my observations for our local conditions (Santa Clara County, July 10-12, 2020), which to summarize...

-

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Last night Rachel Maddow talked about an apparently fake NSA document "leaked" to her organization. There's a lot of info t...

Hey, as coincidence has it I'm hanging here at UCSB, where we have a plagiarism-nailing system called PAIRwise, which is open source. Cool, no?

So I just blogged about it, here: http://doc.weblogs.com/2006/08/31#fightingSplogerism

My blog has been licensed with the CC BY-NC-SA 2.5 for a while now, and sploggers repost my content all the time. It hasn't hurt my position in SERPs, so I'm not really too worried about it.

Attack lawyers is the wrong idea. Search companies should use the CC license RDF along with some kind of authority measure to mechanically identify splogs to remove them from their indexes, etc. This would make splogs less valuable to create, which might curtail their growth.

The reason I mention the authority measure is this: what happens when a splogger reposts your CC-licensed content and puts their own CC license on it? It becomes a Mexican standoff between the two sources ... which would render the technique unusable for search companies. But then, if we had that authority measure ... we wouldn't need the CC license information, anyway.

Oh well.

Dossy -- Here's a thought: Create a registration service that accepts regular weblog pings (like Technorati or Pingomatic). It just records URLs, licenses, content hashes, and most importantly date/timestamps. Then anyone can go to the service to ask which URL was "first" -- which ends the Mexican Standoff: You can't copy something from the future.
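A minimal in-memory sketch of that registration idea, assuming a hypothetical `PingRegistry` class (no real ping service exposes this interface): each ping records the URL, license, content hash, and timestamp, and the first ping for a given hash wins.

```python
import hashlib
from datetime import datetime, timezone


class PingRegistry:
    """Hypothetical registry: remembers who pinged first for each piece of content."""

    def __init__(self):
        self._first_seen = {}  # content hash -> (url, license_name, timestamp)

    @staticmethod
    def content_hash(text):
        # Collapse whitespace and case so trivial reformatting does not change the hash.
        normalized = " ".join(text.split()).lower()
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def ping(self, url, license_name, text, now=None):
        """Record a ping; later pings for the same content do not overwrite the first."""
        now = now or datetime.now(timezone.utc)
        digest = self.content_hash(text)
        self._first_seen.setdefault(digest, (url, license_name, now))
        return digest

    def first(self, text):
        """Return (url, license_name, timestamp) of the earliest ping, or None."""
        return self._first_seen.get(self.content_hash(text))
```

Note that this records the order in which pings reach the service, not the order in which posts were written, and an exact hash only matches verbatim copies.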

John ... now you've traded trust for a race condition. Imagine the ping service is overloaded or down or there's network issues -- the original author's ping doesn't make it through. Later, a splogger picks up the content and goes for the ping and gets it first.

Game over.

Dossy -- Remember that there are always server logs and other evidence. But one could also offer a signing service -- pass every post through the service before posting, which includes its own timestamp and digitally signs the whole thing. The downside is that if the service is down, you can't post. There are lots of ways to skin this cat.
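The signing-service variant might look roughly like the sketch below. To stay within the standard library it uses an HMAC with a secret held by the service as a stand-in for a true digital signature, so only the service itself can verify a receipt. A real service would presumably use a public-key signature so that anyone could check it. `SERVICE_KEY`, `sign_post`, and `verify_post` are hypothetical names.

```python
import hashlib
import hmac
import json

# Assumption: a secret known only to the hypothetical signing service.
SERVICE_KEY = b"hypothetical-service-secret"


def sign_post(url, text, timestamp):
    """Return a receipt binding the URL, content hash, and timestamp together."""
    record = {
        "url": url,
        "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "timestamp": timestamp,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(SERVICE_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify_post(record, text):
    """Check that the receipt is untampered and matches the given post text."""
    claimed = dict(record)
    signature = claimed.pop("signature", "")
    if claimed.get("sha256") != hashlib.sha256(text.encode("utf-8")).hexdigest():
        return False
    payload = json.dumps(claimed, sort_keys=True).encode("utf-8")
    expected = hmac.new(SERVICE_KEY, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(signature, expected)
```

Because the timestamp is part of the signed payload, a splogger cannot take a receipt and backdate it without invalidating the signature.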
