Software engineering's preoccupation is the arrangement of bits, as opposed to atoms. One of the properties of bit arrangements is that their marginal manufacturing cost is zero; once you have an arrangement of bits, you can make as many exact copies of that arrangement as necessary, whenever and wherever they're needed. By contrast, an arrangement of atoms such as a bridge has a large marginal manufacturing cost, even if you just want an exact copy. Further, there are few physical limits to bits, while there are sharp physical limits to atoms. The only real limits to bit arrangement are the human brain and economics (how badly people want bits arranged in particular ways).

These are the fundamental reasons why nearly every software engineering project involves new design, and is therefore hard. In the world of software, design equals bit arrangements, and copying a prior bit arrangement costs nothing. Finding an appropriate bit arrangement used to have substantial cost, but that cost is falling towards zero too. So for a given project, you can assume that competent software engineers have mostly found and copied the relevant patterns of bits where possible, and that the remaining work is design.

Think about what the statement above means. This isn't like a civil engineer dealing with slightly different terrain or traffic loads when adapting an existing design for a new bridge; it's more like a civil engineer being asked to build a bridge out of Jello on Pluto. And the next time, to build one atop a moving lava flow on Mercury. In other words, with the easy, mechanical adaptations taken care of by those ubiquitous bit patterns, the problems left for people to work out are the hard, surprising, novel ones. Usually with nonlinear…

And in software, design really is everything; once you've taken design to a detailed enough level that the implementation is mechanical... we let the machines do it.

Which is why I winced when I read Scott Rosenberg's interview in Salon. He gets it exactly right when he notes that there's always something new in every software project; otherwise there'd be no point in doing it. But he goes off the rails when he says, "...programmers are programmers because they like to code -- given a choice between learning someone else's code and just sitting down and writing their own, they will always do the latter." Jonathan Rentzsch has already skewered this statement better than I could. It is of course true that there are some people who just aren't good at finding prior solutions, or at understanding them once found, and they may contribute to unnecessary re-creation of software, increasing both cost and risk to larger projects. But they're not the norm, and they aren't a major cause of the "always something new" phenomenon. The essence of software development is new design.

This is also why attempts to map manufacturing-based activities to software development are at best rough approximations and at worst dangerous distractions. Software development is a knowledge acquisition activity, not a manufacturing activity.

Subscribe to:

Post Comments (Atom)

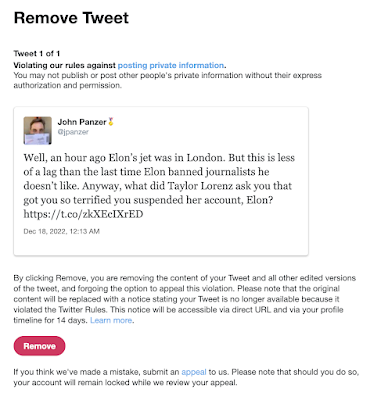

Suspended by the Baby Boss at Twitter

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Update 6/2/2023: I was right . These are my observations for our local conditions (Santa Clara County, July 10-12, 2020), which to summarize...

-

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Last night Rachel Maddow talked about an apparently fake NSA document "leaked" to her organization. There's a lot of info t...

I left a comment about your post on Joe Gregorio's blog, but I thought it was a little passive-aggressive to just leave a critique over there, so I've excerpted it here:

<blockquote><a href="http://bitworking.org/news/113/Knowledge-Acquisition#X3">I really like the idea of programming as knowledge acquisition. But the bit about "unnecessary re-creation of software" seems to contradict his whole piece and smacks of a little elitism if you ask me.</a></blockquote>

It seems there might be some more nuance to "unnecessary" than you're letting on. As it stands, it seems to directly contradict the title of your post. What is unnecessary if done for the holy pursuit of knowledge acquisition?

I suppose whether a particular re-creation is necessary depends on whether you're an 'engineer' or a 'scientist'. I was talking from the 'engineering' point of view, where the primary goal is to solve a problem. Here knowledge acquisition is just a prerequisite and unnecessary re-creation doesn't advance your goal. If you are being a 'scientist' and your primary goal is to acquire knowledge, then re-creation is fine and actually is a foundation of the scientific method. Sometimes you have to go back and forth between the two roles of course.

Perhaps I misinterpreted Scott Rosenberg and he was really saying that the big problem is that programmers behave like scientists, when their projects need them to behave like engineers. The root problem then is not competence but a conflict of interest. I just don't think this is a key problem. That is, it's a real issue, but I don't see it as the key reason why software projects take so long or fail so often. I actually see the reverse problem a lot more (engineers trying to get a quick solution and not recognizing when it's time to step back and put on a scientist hat for a while).